LMNTRIX®

ALIGNS WITH GARTNER

The LMNTRIX Active Defense validated architecture was developed specifically to complement an organization’s existing defenses and enable a comprehensive adaptive security protection architecture as prescribed by Gartner.

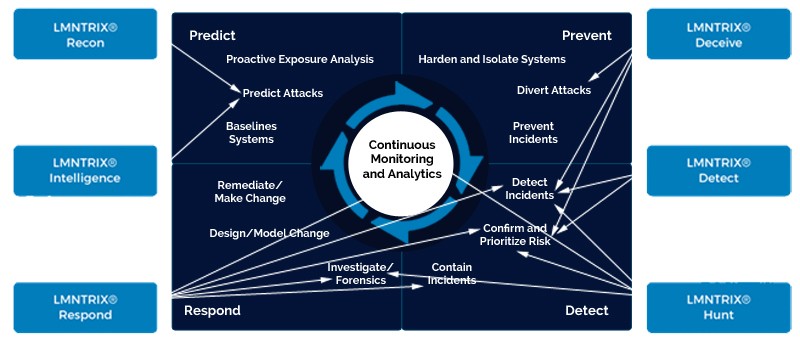

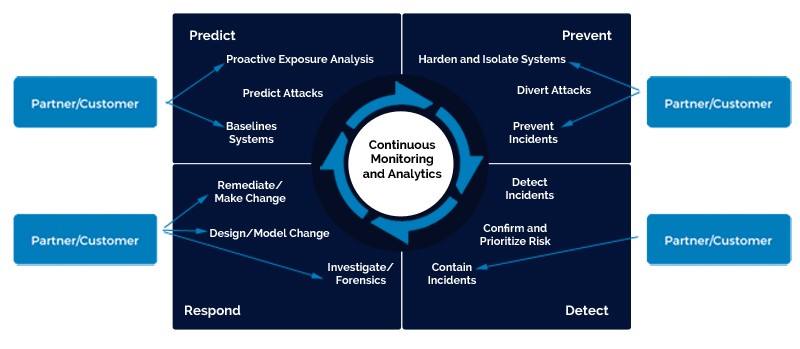

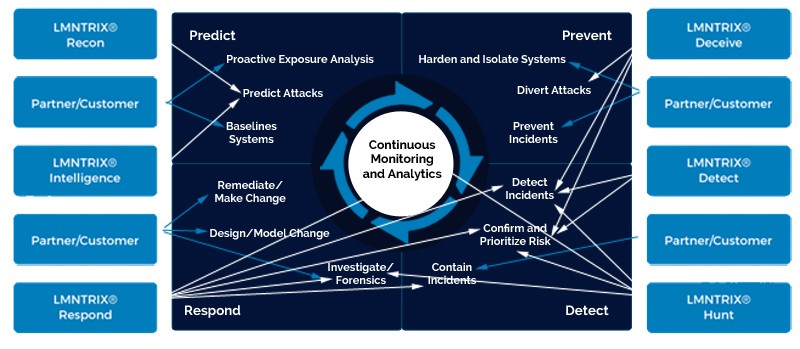

The following image depicts how LMNTRIX works with clients and partners to deliver on all 12 Gartner capabilities – those that are deemed necessary to augment an organization’s ability to block, prevent, detect and respond to attacks.

According to Gartner, reducing the attack surface is the core foundation of any successful security architecture. This is achieved through a combination of techniques that limit a hacker’s ability to reach systems, find vulnerabilities to target and execute malware. Traditional application whitelisting, also known as “default deny”, is a powerful capability that falls into this category. This approach can be deployed either at the network firewall level (which only allows communication on specified ports/protocols) or at the system application control level (which only allows specified applications to execute). In the same spirit, data encryption can be thought of as a form of whitelisting that hardens defenses at the information level, because only holders of the correct keys can read the protected data.
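To make “default deny” concrete, the sketch below applies it at both levels described above. It is purely illustrative and not how any particular firewall or application control product works: the policy set `ALLOWED_FLOWS`, the helper names and the choice of SHA-256 hashes to identify executables are all assumptions. The key property is that anything not explicitly listed is refused.

```python
from __future__ import annotations

import hashlib

# Hypothetical network policy: the only (port, protocol) pairs allowed to communicate.
ALLOWED_FLOWS: frozenset[tuple[int, str]] = frozenset({(443, "tcp"), (53, "udp")})


def firewall_permits(port: int, protocol: str) -> bool:
    """Default deny at the network level: anything not explicitly listed is refused."""
    return (port, protocol.lower()) in ALLOWED_FLOWS


def digest(binary: bytes) -> str:
    """Identify an executable by the SHA-256 hash of its contents (an assumed scheme)."""
    return hashlib.sha256(binary).hexdigest()


def may_execute(binary: bytes, allowlist: frozenset[str]) -> bool:
    """Default deny at the application control level: run only allowlisted binaries."""
    return digest(binary) in allowlist
```

For example, with `approved = frozenset({digest(b"trusted-tool")})`, `may_execute(b"trusted-tool", approved)` is `True` while any other binary is refused, and `firewall_permits(22, "tcp")` is `False` simply because SSH was never listed.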

Other approaches in this category include vulnerability and patch management, which identifies and closes vulnerabilities, as well as the emerging endpoint isolation and “sandboxing” techniques. These latter techniques proactively limit the ability of a particular process, system, application or network to interact with others.

LMNTRIX relies on its clients and/or partners to ensure systems are hardened and isolated.

It is no secret that attackers have one major advantage over organizations: time. This evolving category tries to counter that advantage by wasting hackers’ time. This is achieved by masking legitimate systems and vulnerabilities through a variety of techniques, including the creation of fake systems, vulnerabilities and information that are used to lure and occupy the attacker. On its own, this “security through obscurity” approach is insufficient to protect an organization, but it is a critical plank in a layered, defense-in-depth protection strategy.

Not only does this technique waste hackers’ time, it also allows attackers to be identified quickly and with high assurance. Because legitimate users have no reason to access the fake systems, vulnerabilities and information, any interaction with them is a strong signal of malicious activity, allowing security teams to respond rapidly and prevent the attacker from causing damage.
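The high-assurance property follows from a simple rule: any touch of a decoy is suspicious. The sketch below illustrates that rule under stated assumptions. The decoy inventory, the `AccessEvent` record and `decoy_alerts` are hypothetical names, and this is not a description of how LMNTRIX Deceive is implemented.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical decoy inventory: assets that exist only to be discovered by an intruder.
DECOYS: dict[str, set[str]] = {
    "host": {"fin-db-backup-02"},
    "credential": {"svc_backup_admin"},
    "file": {"/finance/payroll_2019.xlsx"},
}


@dataclass(frozen=True)
class AccessEvent:
    user: str
    kind: str    # "host", "credential" or "file"
    target: str  # name of the asset that was touched


def decoy_alerts(events: list[AccessEvent]) -> list[AccessEvent]:
    """Return every event that touched a decoy.

    No legitimate user has a reason to access these assets, so each hit is
    treated as a high-assurance indicator of an attacker rather than noise.
    """
    return [e for e in events if e.target in DECOYS.get(e.kind, set())]
```

Because the rule needs no statistical threshold, it produces no alerts for normal work and an alert for every decoy interaction, which is what lets security teams act on it quickly.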

With LMNTRIX Deceive we deploy deceptions everywhere to divert attackers and change the asymmetry of cyber warfare by focusing on the weakest link in a targeted attack – the human team behind it. Targeted attacks are orchestrated by human teams, and humans are always vulnerable.

By weaving a deceptive layer over every endpoint, server and network component, LMNTRIX Deceive confronts the attacker with a false world in which no data can be trusted. If attackers are unable to collect reliable data, they cannot make sound decisions, and the attack is stopped in its tracks.

This category includes traditional “signature-based” anti-malware scanning as well as network- and host-based intrusion prevention systems, both well-established approaches that work to prevent hackers from gaining initial access to systems. “Behavioral signatures” can complement these traditional approaches by preventing systems from communicating with known command-and-control (C2) centers. Threat intelligence from third-party reputation feeds is central to deploying behavioral signatures, and this intelligence is integrated into network, gateway or host-based controls. Host-based controls can also prevent one process from injecting itself into the memory space of another.
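A reputation feed is typically consumed as a list of known-bad addresses or networks that outbound connections are checked against. The sketch below assumes a simple text feed format (one IP address or CIDR network per line, `#` for comments); real feeds, including LMNTRIX Intelligence, may use different formats, and the function names are hypothetical. It relies only on Python’s standard `ipaddress` module.

```python
import ipaddress


def load_feed(lines):
    """Parse an assumed feed format: one IP or CIDR network per line, '#' starts a comment."""
    networks = []
    for line in lines:
        entry = line.split("#", 1)[0].strip()
        if entry:
            # strict=False tolerates entries with host bits set, e.g. "203.0.113.9/24".
            networks.append(ipaddress.ip_network(entry, strict=False))
    return networks


def is_known_c2(dest_ip, feed):
    """True if the destination of an outbound connection falls inside any feed entry."""
    addr = ipaddress.ip_address(dest_ip)
    # Membership tests between IPv4 and IPv6 objects simply return False.
    return any(addr in net for net in feed)


def outbound_verdict(dest_ip, feed):
    """Block outbound connections to known command-and-control infrastructure."""
    return "block" if is_known_c2(dest_ip, feed) else "allow"
```

A gateway or host agent would call something like `outbound_verdict` before allowing a connection; the addresses in the test use the documentation-only ranges reserved by RFC 5737.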

LMNTRIX Intelligence is one such reputation service feed that can be integrated with a client’s network, gateway or host-based controls, while the LMNTRIX Respond – Advanced Endpoint Threat Detection service is used for confirming infections quickly and blocking or quarantining with precision in real-time or through change control.

For other preventative measures, LMNTRIX collaborates with clients or partners to use existing network, gateway or host-based controls to prevent incidents detected by LMNTRIX.

The unfortunate truth is that some attacks will bypass traditional blocking and prevention mechanisms. When the inevitable occurs, it is critical that the intrusion is detected as quickly as possible in order to minimize the hacker’s ability to inflict material damage. While a variety of techniques may be used to detect incidents, most of them rely on analyzing the data gathered by continuous monitoring at the core of the adaptive protection architecture. This analysis enables the detection of deviations from normal patterns of behavior, of outbound connections to known malicious entities, and of sequences of events and behaviors that may be potential indicators of compromise.
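One simple way to detect deviations from normal behavior is to compare a new observation with a baseline of past values and flag it when it lies several standard deviations away (a z-score test). The sketch below is only an illustration of that general technique, not the analytics used by LMNTRIX Detect or Hunt; the metric (for instance, megabytes sent outbound per day by one host) and the threshold of 3.0 are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history, observed, threshold=3.0):
    """Flag `observed` if it lies more than `threshold` standard deviations
    from the mean of the baseline `history` (a basic z-score test)."""
    if len(history) < 2:
        return False  # not enough data to form a baseline yet
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return observed != mu  # perfectly flat baseline: any change stands out
    return abs(observed - mu) / sigma > threshold
```

With a baseline of `[120, 130, 125, 118, 127, 122, 131]` MB per day, an observation of 900 MB is flagged while 128 MB is not. Production systems typically use richer models, but the principle of scoring against a learned baseline is the same.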

LMNTRIX uses a combination of advanced network (LMNTRIX Detect) and endpoint threat detection (LMNTRIX Respond) capability, combined with deceptions everywhere (LMNTRIX Deceive) and continuous monitoring and hunting (LMNTRIX Hunt) to detect incidents that bypass perimeter controls. We do this without any reliance on clients’ existing perimeter controls and without any log collection.

By combining a thorough view of behavior on the endpoint (LMNTRIX Respond) with the rich set of data from network packets (LMNTRIX Detect and Hunt), our intrusion analysts can see and understand everything happening in the client environment and, within seconds, investigate incidents down to the most granular detail in order to take the most appropriate action.

The first step after detecting a potential incident is to confirm its veracity by correlating indicators of compromise. By drawing on all the intelligence available from across the client’s architecture, for example by comparing what a network-based threat detection system observed in its sandboxed environment with the processes, behaviors and registry entries observed on actual endpoints, a potential incident can be confirmed swiftly and accurately. The next critical step is to prioritise the incident using internal and external context (such as the user, their role, the sensitivity of the information being handled and the business value of the asset) in order to gauge the level of risk to the enterprise. Once prioritised, incidents can be presented visually to security operations analysts so they can focus on the highest-risk issues first.
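The confirm-then-prioritise flow can be sketched as follows. Everything here is an illustrative assumption rather than a documented scoring model: confirmation is modelled as an overlap between network-side and endpoint-side indicators, and risk as the product of a role weight, a 1 to 3 data sensitivity rating and a 1 to 3 asset value rating. The names `Alert`, `ROLE_WEIGHT` and `triage` are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical weights for user roles; unlisted roles default to 1.
ROLE_WEIGHT = {"admin": 3, "finance": 2}


@dataclass
class Alert:
    host: str
    network_iocs: set[str]   # indicators seen by network detection / sandboxing
    endpoint_iocs: set[str]  # processes, behaviors, registry keys seen on the host
    user_role: str
    data_sensitivity: int    # assumed scale: 1 (public) .. 3 (restricted)
    asset_value: int         # assumed scale: 1 (low) .. 3 (critical)


def confirmed(alert: Alert) -> bool:
    """Treat an incident as confirmed when network and endpoint evidence overlap."""
    return bool(alert.network_iocs & alert.endpoint_iocs)


def risk_score(alert: Alert) -> int:
    """Combine user, data and asset context into a single risk number."""
    return ROLE_WEIGHT.get(alert.user_role, 1) * alert.data_sensitivity * alert.asset_value


def triage(alerts: list[Alert]) -> list[Alert]:
    """Drop unconfirmed alerts and order the rest highest risk first."""
    return sorted((a for a in alerts if confirmed(a)), key=risk_score, reverse=True)
```

A multiplicative score was chosen here so that an alert must rank highly on several context dimensions to reach the top of the queue; a weighted sum would be an equally reasonable design.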

Confirmation and risk prioritization are a natural part of the LMNTRIX Deceive, Detect, Respond and Hunt capabilities. Across the LMNTRIX Active Defense, whenever our intrusion analysts validate a breach, it is immediately reported to the client for any residual action on their part (such as blocking on their firewall or IPS).

Isolating the compromised system or account is critical once an incident has been identified, confirmed and prioritized. Commonly, this is achieved via containment capabilities such as network-level isolation, account lockout, endpoint containerization, killing a system process, and immediately preventing others from executing the malware or accessing the compromised content.

The LMNTRIX Respond advanced endpoint threat detection capability supports incident containment by identifying the exact location of malicious files for precise remediation. Once they have identified the exact location and persistence mechanism of a malicious file, our intrusion analysts categorize the file as ‘blacklisted’. The analyst then blocks and quarantines the blacklisted file; once quarantined, it may be deleted from the system. Blocking can be enabled across the entire enterprise or for a select set of individual machines.
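The blacklist, block and quarantine steps, including the choice between enterprise-wide and per-machine scope, can be sketched as below. This is a hypothetical illustration, not the LMNTRIX Respond agent: the `Blocklist` class, identifying files by SHA-256 hash, and quarantining by moving the file into a separate directory are all assumptions made for the example.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_file(path):
    """Hash a file in chunks so large binaries need not fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


class Blocklist:
    """Hypothetical blocklist mapping a file digest to its blocking scope."""

    def __init__(self):
        self._entries = {}  # digest -> None (enterprise-wide) or a set of hostnames

    def add(self, digest, hosts=None):
        self._entries[digest] = None if hosts is None else set(hosts)

    def is_blocked(self, digest, host):
        if digest not in self._entries:
            return False
        scope = self._entries[digest]
        return scope is None or host in scope


def quarantine(path, quarantine_dir):
    """Move a blacklisted file into a quarantine directory; deletion can follow later."""
    dest_dir = Path(quarantine_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)
    dest = dest_dir / (sha256_file(path) + ".quarantined")
    shutil.move(str(path), str(dest))
    return dest
```

Quarantining before deleting keeps the file available for further analysis while ensuring it can no longer run from its original location.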

Once compromised systems or accounts have been isolated, retrospective analysis of the data gathered from continuous monitoring is required to determine the root cause and full scope of the breach. It is essential not only to discover how the attacker gained a foothold in the enterprise, but also to ascertain whether an unknown or unpatched vulnerability was exploited, how many systems were impacted and what specific data was exfiltrated. Detailed historical monitoring information is what allows a security analyst to answer these questions, as network flow data alone may be insufficient for a thorough investigation. It is the combination of advanced monitoring technologies, such as full network packet capture, endpoint system activity monitoring and advanced analytics tools, that enables a security analyst to answer them. It is also pivotal that new signatures/rules/patterns are run against historical data to see whether the enterprise has already been targeted with the same attack without detecting it.
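Running a new signature against historical data (often called a retro hunt) can be illustrated with a regular expression applied to stored monitoring records. The `Record` structure, the `retro_hunt` and `affected_hosts` helpers, and the example signature are hypothetical. Real platforms search packet captures and endpoint telemetry at far larger scale, but the logic is the same.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Record:
    timestamp: datetime
    host: str
    data: str  # e.g. a DNS query, URL or command line captured by monitoring


def retro_hunt(history: list[Record], signature: str) -> list[Record]:
    """Apply a newly written regex signature to stored records, oldest match first,
    to reveal whether (and since when) the attack was already present."""
    pattern = re.compile(signature)
    hits = [r for r in history if pattern.search(r.data)]
    return sorted(hits, key=lambda r: r.timestamp)


def affected_hosts(hits: list[Record]) -> set[str]:
    """Scope of the breach: every host that matched the signature."""
    return {r.host for r in hits}
```

The earliest match approximates when the attacker first appeared, and the set of matching hosts gives a first estimate of how many systems were impacted.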

The LMNTRIX Active Defense incorporates endpoint forensics (LMNTRIX Respond) and network forensics (LMNTRIX Hunt), while onsite forensics capability is delivered through our partners.

With LMNTRIX Respond, our intrusion analysts can pull full process and memory dumps, view the Master File Table (MFT), and see all modified/deleted files. With LMNTRIX Hunt, we use behavior analytics and data science to identify covert channels and C2 threats. We do this by capturing and enriching full network packet data, which means an attack can be reconstructed to understand its full scope and, in turn, to implement an effective remediation plan that stops the attacker from achieving their objective.

Once a threat has been dealt with, policy changes or updated controls will likely be needed to prevent new attacks or reinfection of systems. These may include closing vulnerabilities, closing network ports, updating signatures, updating system configurations, modifying user permissions, updating user training or strengthening information protection options (such as encryption). With more advanced platforms, it is possible to automatically generate new signatures/rules/patterns to address the newest advanced attacks, providing what is essentially a “custom defense”. However, before these are implemented, the changes need to be modelled against the historical data gathered from continuous monitoring to minimise false positives and false negatives.
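Modelling a proposed rule against historical data amounts to replaying it over past events whose outcome is already known and counting its mistakes. The sketch below assumes the history has been labelled malicious (`True`) or benign (`False`) and that a rule is any predicate over an event. Both are simplifying assumptions for illustration, and `evaluate_rule` is a hypothetical name.

```python
def evaluate_rule(rule, labelled_history):
    """Replay `rule` over historical (event, is_malicious) pairs and return a
    confusion-matrix count of true/false positives and negatives."""
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for event, is_malicious in labelled_history:
        fired = rule(event)
        if fired and is_malicious:
            counts["tp"] += 1  # caught a real attack
        elif fired:
            counts["fp"] += 1  # would have blocked legitimate activity
        elif is_malicious:
            counts["fn"] += 1  # would have missed a real attack
        else:
            counts["tn"] += 1  # correctly ignored benign activity
    return counts
```

For example, replaying the candidate rule `lambda e: e["dest_port"] == 4444` over a small history can show both a false positive and a false negative, signalling that the rule needs refinement before it is pushed to production controls.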

Our team works collaboratively with clients and partners to assist in defining the necessary changes to policies or any new controls that are needed to prevent new attacks. LMNTRIX does not make any of the changes nor do we sell or deploy any of the required controls.

After modelling and testing have confirmed that a change is effective, the change must be implemented. While some responses can be automated and policy changes pushed to security policy enforcement points (firewalls, application control, anti-malware systems, etc.), most enterprises prefer to implement these changes manually, as such automated systems are still in their infancy.

At LMNTRIX, we do not manage any client’s security controls; as such, we do not make any of the required changes, nor do we sell or deploy any of the required controls. We expect our clients and partners to be responsible for these activities.

Due to the constantly evolving nature of enterprise IT (whether in the form of the continued introduction of new mobile devices and cloud-based services, the ephemeral nature of user accounts, the discovery of new vulnerabilities or the deployment of new applications), baselining must be continuous, as must the discovery of end-user devices, backend systems, cloud services, identities, vulnerabilities, relationships and typical interactions.
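Continuous baselining means the notion of “normal” is updated with every new observation instead of being computed once. A common way to do this without storing all past data is Welford’s online algorithm for a running mean and variance, sketched below. The per-entity dictionary also shows how a previously unseen device or identity surfaces as a discovery event. The class and function names are hypothetical; this is not a description of LMNTRIX’s baselining.

```python
import math


class RunningBaseline:
    """Continuously updated mean and standard deviation (Welford's online algorithm)."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def stdev(self):
        """Sample standard deviation; 0.0 until at least two values are seen."""
        return math.sqrt(self._m2 / (self.n - 1)) if self.n > 1 else 0.0


def observe(baselines, entity, value):
    """Update the baseline for `entity` (a device, account, service, ...).
    Returns True if the entity was never seen before, i.e. it was just discovered."""
    is_new = entity not in baselines
    baselines.setdefault(entity, RunningBaseline()).update(value)
    return is_new
```

Each update costs constant time and memory per entity, which is why online estimators of this kind suit environments where devices, accounts and applications come and go constantly.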

The LMNTRIX Respond (advanced endpoint threat detection) and LMNTRIX Hunt (advanced analytics) capabilities use automated baselining; however, all other network baselining requirements as defined by Gartner need to be delivered by clients or partners.

While the ability to predict attacks is only emerging, it is a capability which will continue to grow in importance. By using reconnaissance of hacker marketplaces and bulletin boards together with the type and sensitivity of data being protected, this category is designed to proactively anticipate future attacks so that enterprises can adjust their security strategies accordingly. For example, if intelligence gathered indicates an attack on a specific application or OS is likely, an enterprise could proactively implement application firewalling protection, strengthen authentication requirements or proactively block certain types of access.

With the LMNTRIX Recon capability, we automatically detect cyberthreats in the open, deep and dark web by aggregating unique cyber intelligence from multiple sources. We then analyze these cyberthreats using proprietary data mining algorithms and enable remediation by translating our cyber intelligence into security actions.

With the LMNTRIX Intelligence capability we deliver earlier detection and identification of adversaries in your organization’s network by making it possible to correlate tens of millions of threat indicators against your real-time network activity logs. The LMNTRIX approach enables detection at any point during the attack lifecycle, making mitigation possible before there has been any material damage to your organization.

Because intelligence, both internal and external, is constantly updated, an organization’s risk exposure must be re-evaluated continually as well. In some cases, this re-evaluation may necessitate adjustments to enterprise policies or controls. A common example is when new applications, whether enterprise or consumer, are discovered on devices on the enterprise network. The risk these applications pose to the organization must be evaluated and could lead to additional controls such as application firewalls or even endpoint containment.

LMNTRIX Intelligence and Recon provide some of the necessary inputs for clients to assess risk and exposure against their assets. Ultimately, however, proactive exposure analysis is the client’s responsibility.